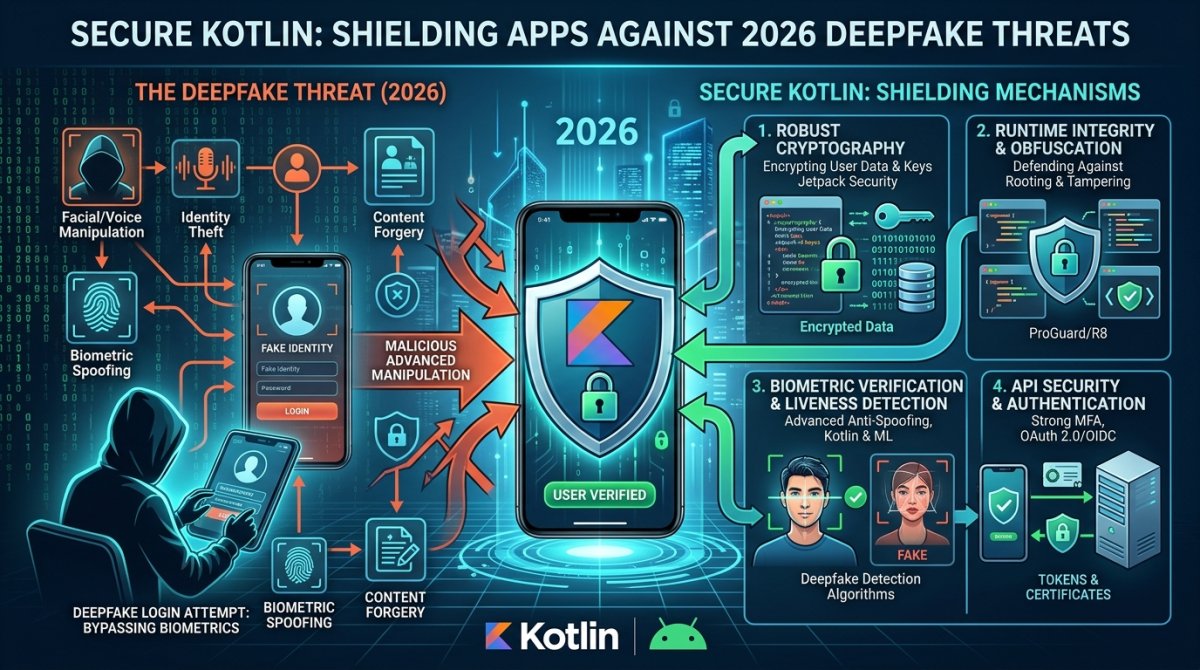

Secure Kotlin: Shielding Apps Against 2026 Deepfake Threats

As artificial intelligence moves from generating simple images to creating highly convincing real-time video and audio, deepfake threats have become a top-tier security concern in 2026. For developers building with Kotlin, whether for Android or Kotlin Multiplatform, a "Zero Trust" architecture for media and identity is no longer optional.

1. Real-Time Liveness Detection

In 2026, static photo verification is easily bypassed by AI-generated avatars. Secure Kotlin apps should implement active liveness detection.

- Challenge-Response Logic: Use Kotlin to challenge users to perform a randomly chosen action, such as blinking, turning their head, or reading a specific "nonce" (number used once) aloud, during the KYC or login process. Generate the challenge on the server so that a pre-recorded or pre-generated clip cannot anticipate it.

- Biometric Prompt API: Integrate the AndroidX BiometricPrompt API and require Class 3 (strong) biometrics. Spoof resistance comes from the device's certified biometric hardware rather than from app code, and BiometricPrompt only confirms that an enrolled user of the device is present; it does not verify a new identity for KYC.
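The challenge-response idea can be sketched in plain Kotlin. This is a minimal illustration, assuming challenges are issued and redeemed on a server. `ChallengeIssuer`, `LivenessChallenge`, and the `LivenessAction` values are hypothetical names, and deciding whether the user really performed the action is left to whatever liveness component you use.

```kotlin
import java.security.SecureRandom
import java.util.Base64
import java.util.concurrent.ConcurrentHashMap

// Hypothetical action set; a real liveness component would define its own.
enum class LivenessAction { BLINK, TURN_HEAD_LEFT, TURN_HEAD_RIGHT, SAY_NONCE }

data class LivenessChallenge(
    val nonce: String,
    val action: LivenessAction,
    val expiresAtMillis: Long,
)

class ChallengeIssuer(
    private val ttlMillis: Long = 30_000,
    private val random: SecureRandom = SecureRandom(),
) {
    // Challenges that have been issued but not yet redeemed.
    private val pending = ConcurrentHashMap<String, LivenessChallenge>()

    fun issue(nowMillis: Long = System.currentTimeMillis()): LivenessChallenge {
        // 128 random bits, URL-safe so the nonce can be shown or spoken.
        val bytes = ByteArray(16).also { random.nextBytes(it) }
        val nonce = Base64.getUrlEncoder().withoutPadding().encodeToString(bytes)
        val actions = LivenessAction.values()
        val challenge = LivenessChallenge(
            nonce = nonce,
            action = actions[random.nextInt(actions.size)],
            expiresAtMillis = nowMillis + ttlMillis,
        )
        pending[nonce] = challenge
        return challenge
    }

    // Removing the entry first makes every nonce single-use, even on failure.
    fun redeem(
        nonce: String,
        performed: LivenessAction,
        nowMillis: Long = System.currentTimeMillis(),
    ): Boolean {
        val challenge = pending.remove(nonce) ?: return false
        return nowMillis <= challenge.expiresAtMillis && performed == challenge.action
    }
}
```

Because the nonce is removed on the first redemption attempt, a replayed response fails even if the recorded action was correct. The short expiry limits how long an attacker has to synthesize a matching clip.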

2. Media Provenance and Metadata Verification

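A deepfake cannot carry a valid cryptographic signature from a trusted capture pipeline, so signing media at capture gives your backend something concrete to verify.

- CameraX Security: When capturing media within your app, use the CameraX library and sign the image or video bytes, together with their metadata, at the moment of capture. CameraX does not sign anything itself, so the signing step is your app's responsibility.

- Hardware-Backed Keystores: Use the Android Keystore to generate and hold the signing key in the device's Trusted Execution Environment (TEE), so the private key never leaves secure hardware. Your platform can then check that an uploaded video was signed on the device, which makes it much harder to pass off media injected via a virtual camera or a deepfake bot as "captured-on-device."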
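The sign-at-capture and verify-on-upload flow might look like the sketch below. It is an illustration under stated assumptions, not a definitive implementation: it uses a software EC key from the standard `java.security` API so that it runs on any JVM, whereas on Android the key pair would be generated through the `AndroidKeyStore` provider with a `KeyGenParameterSpec`. The function names and the metadata string format are hypothetical.

```kotlin
import java.nio.ByteBuffer
import java.security.KeyPair
import java.security.KeyPairGenerator
import java.security.PublicKey
import java.security.Signature
import java.security.spec.ECGenParameterSpec

// Assumption: a software key for illustration. On a device this key would
// live in the Android Keystore so the private half never leaves hardware.
fun generateCaptureKey(): KeyPair =
    KeyPairGenerator.getInstance("EC").apply {
        initialize(ECGenParameterSpec("secp256r1"))
    }.generateKeyPair()

// Length-prefix each field so bytes cannot be shifted between
// the media and the metadata without changing the signed input.
private fun Signature.updateField(bytes: ByteArray) {
    update(ByteBuffer.allocate(4).putInt(bytes.size).array())
    update(bytes)
}

// Signs the captured bytes together with their metadata.
fun signCapture(keyPair: KeyPair, mediaBytes: ByteArray, metadata: String): ByteArray =
    Signature.getInstance("SHA256withECDSA").run {
        initSign(keyPair.private)
        updateField(mediaBytes)
        updateField(metadata.toByteArray(Charsets.UTF_8))
        sign()
    }

// Server-side check: true only if media and metadata are exactly what was signed.
fun verifyCapture(
    publicKey: PublicKey,
    mediaBytes: ByteArray,
    metadata: String,
    signature: ByteArray,
): Boolean =
    Signature.getInstance("SHA256withECDSA").run {
        initVerify(publicKey)
        updateField(mediaBytes)
        updateField(metadata.toByteArray(Charsets.UTF_8))
        verify(signature)
    }
```

A signature only proves that the holder of the key signed these bytes. It says nothing about what was in front of the lens, so it is most useful when combined with device attestation.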

3. Guarding Against Virtual Camera Injection

A common vector for deepfake attacks is the use of "Virtual Camera" apps that trick your application into accepting a fake video stream as a live feed.

- Device Attestation: Use the Play Integrity API (or its 2026 equivalent) in your Kotlin code to check the integrity of the device, and evaluate the verdict on your server rather than in the app. If the device is rooted or running a known "hooking" framework like Xposed, your app should automatically disable high-security features.

- Input Validation: Implement strict checks to ensure the CameraDevice being used is a physical, hardware-integrated unit and not a software-emulated peripheral.
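The attestation decision can be expressed as a small server-side policy function. The sketch below assumes the Play Integrity token has already been decrypted and parsed elsewhere. `IntegrityVerdict`, `AccessLevel`, and `evaluate` are hypothetical names, while the string labels are the verdict values that Play Integrity reports.

```kotlin
// Hypothetical holder for the fields of a decoded Play Integrity verdict.
// Token decryption and JSON parsing happen on the server and are omitted.
data class IntegrityVerdict(
    val requestNonce: String,
    val appRecognitionVerdict: String,
    val deviceRecognitionVerdicts: Set<String>,
)

enum class AccessLevel { FULL, RESTRICTED }

// Grants full access only when the verdict answers our own request,
// the app binary is the one distributed by Play, and the device passes
// the device integrity check. Anything else loses high-security features.
fun evaluate(verdict: IntegrityVerdict, expectedNonce: String): AccessLevel {
    val nonceMatches = verdict.requestNonce == expectedNonce
    val appGenuine = verdict.appRecognitionVerdict == "PLAY_RECOGNIZED"
    val deviceTrusted = "MEETS_DEVICE_INTEGRITY" in verdict.deviceRecognitionVerdicts
    return if (nonceMatches && appGenuine && deviceTrusted) {
        AccessLevel.FULL
    } else {
        AccessLevel.RESTRICTED
    }
}
```

Returning a restricted level instead of blocking outright matches the advice above: the app keeps working, but face capture and other high-security flows stay disabled.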

4. Cryptographic Watermarking

Metadata can be stripped whenever a file is re-encoded or re-shared. Cryptographic watermarking embeds the verification data into the pixels themselves.

- Pixel-Level Integrity: Use an image processing library from Kotlin to embed an invisible, fragile watermark derived from the pixel content. If a deepfake tool alters the face in the video, the recomputed watermark no longer matches the embedded one, and the media can be flagged as "Tampered." The same fragility means lossy re-compression also breaks the mark, so verify before any transcoding.
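One simple way to build such a fragile watermark is to hide a keyed MAC of the image in the least significant bits of a few pixels. The sketch below is an illustration under assumptions, not a production scheme: it works on a raw ARGB `IntArray` for a single frame, uses HMAC-SHA256 with a secret key known to the verifier, and all function names are hypothetical.

```kotlin
import java.nio.ByteBuffer
import javax.crypto.Mac
import javax.crypto.spec.SecretKeySpec

// HMAC-SHA256 yields 256 bits; each is hidden in the lowest bit of one pixel.
private const val MAC_BITS = 256

// MAC over every pixel, with the carrier bits zeroed so that
// embedding the tag does not change the value being authenticated.
private fun macOverPixels(pixels: IntArray, key: ByteArray): ByteArray {
    val mac = Mac.getInstance("HmacSHA256").apply { init(SecretKeySpec(key, "HmacSHA256")) }
    val buffer = ByteBuffer.allocate(4)
    for (i in pixels.indices) {
        val value = if (i < MAC_BITS) (pixels[i] and 1.inv()) else pixels[i]
        buffer.clear()
        buffer.putInt(value)
        mac.update(buffer.array())
    }
    return mac.doFinal()
}

// Reads bit number `index` (most significant bit first) from a byte array.
private fun bitAt(bytes: ByteArray, index: Int): Int =
    (bytes[index / 8].toInt() shr (7 - (index % 8))) and 1

// Returns a copy of the image with the tag written into the carrier bits.
fun embedWatermark(pixels: IntArray, key: ByteArray): IntArray {
    require(pixels.size >= MAC_BITS) { "need at least $MAC_BITS pixels" }
    val tag = macOverPixels(pixels, key)
    return IntArray(pixels.size) { i ->
        if (i < MAC_BITS) (pixels[i] and 1.inv()) or bitAt(tag, i) else pixels[i]
    }
}

// Recomputes the tag and compares it with the embedded bits.
fun isUntampered(pixels: IntArray, key: ByteArray): Boolean {
    if (pixels.size < MAC_BITS) return false
    val expected = macOverPixels(pixels, key)
    return (0 until MAC_BITS).all { i -> (pixels[i] and 1) == bitAt(expected, i) }
}
```

Changing any pixel changes the recomputed MAC, so the embedded bits stop matching. The visible cost is at most one unit of the blue channel in the first 256 pixels.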

5. The Developer’s Security Checklist for 2026

| Security Layer | Kotlin Implementation | Purpose |
| --- | --- | --- |
| Identity | BiometricPrompt + CryptoObject | Prevents unauthorized AI-access |
| Network | Certificate Pinning | Stops "Man-in-the-Middle" AI-injection |
| Media | Hardware-signed Metadata | Proves the source of the video |
| Integrity | Play Integrity / Device Check | Blocks virtual camera software |
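For the Network row, certificate pinning comes down to comparing a hash of the server's public key against a list shipped with the app. The sketch below shows only that comparison, using the `sha256/<base64>` pin format of a SHA-256 hash over the encoded public key. `spkiPin` and `isPinned` are hypothetical helper names, and in a real app the check is normally delegated to the HTTP client or the platform's network security configuration rather than written by hand.

```kotlin
import java.security.MessageDigest
import java.security.PublicKey
import java.util.Base64

// Hashes the key's X.509 SubjectPublicKeyInfo encoding and formats it as a pin.
fun spkiPin(publicKey: PublicKey): String {
    val digest = MessageDigest.getInstance("SHA-256").digest(publicKey.encoded)
    return "sha256/" + Base64.getEncoder().encodeToString(digest)
}

// True when the presented key matches one of the pins bundled with the app.
fun isPinned(publicKey: PublicKey, allowedPins: Set<String>): Boolean =
    spkiPin(publicKey) in allowedPins
```

Shipping at least one backup pin alongside the active one avoids locking users out when the server key is rotated.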

The Bottom Line

Protecting your users in 2026 requires a shift from "validating the data" to "validating the source." By combining Kotlin with Android's hardware-backed security APIs, you can help NxTrendZ and your client projects remain resilient against the rising tide of AI-driven impersonation.
